Here’s what I’ve learned from watching teams succeed and fail at this.

How large language models actually work

Let me skip the typical explanation about neural networks and billions of parameters. Here’s what matters for building features.

Large language models predict the next token (roughly, a word or word fragment) in a sequence based on patterns learned from massive text datasets. That simple mechanism, scaled up with the transformer architecture and billions of parameters, creates something that feels like understanding.

The model doesn’t “know” things the way humans do. It recognizes statistical patterns in how words relate to each other. But those patterns are so complex and nuanced that the output often demonstrates reasoning, creativity, and contextual understanding that’s genuinely useful.

What this means practically: the quality of what you get from an LLM depends heavily on how you ask. Prompt engineering isn’t optional. It’s the primary interface for controlling model behavior.

A vague prompt gets vague results. A specific, well-structured prompt that provides context, constraints, and examples gets much better output. The difference between “write a product description” and “write a 50-word product description for our e-commerce site targeting busy professionals, emphasizing time-saving benefits, in an approachable but professional tone” is enormous.
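
One way to make that specificity repeatable is to assemble prompts from explicit parts. The sketch below is only an illustration: the function name `build_prompt` and its fields are assumptions, not a standard API.

```python
def build_prompt(task: str, audience: str, constraints: list[str], tone: str) -> str:
    """Assemble a prompt from explicit parts so nothing is left implicit."""
    lines = [
        f"Task: {task}",
        f"Audience: {audience}",
        f"Tone: {tone}",
        "Constraints:",
    ]
    lines += [f"- {c}" for c in constraints]
    return "\n".join(lines)


# Mirrors the product description example above.
prompt = build_prompt(
    task="Write a product description for our e-commerce site.",
    audience="busy professionals",
    constraints=["About 50 words", "Emphasize time-saving benefits"],
    tone="approachable but professional",
)
```

Keeping the parts separate also makes it obvious when a prompt is missing its audience or constraints.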

Model size matters too, but not in obvious ways. Bigger models aren’t always better for your specific use case. They’re more capable at complex reasoning and nuanced tasks but slower and more expensive to run. Smaller models respond faster and cost less but might struggle with tasks requiring deep contextual understanding.

Choosing the right model means matching capability to actual requirements. Don’t use GPT-4 for simple classification tasks that GPT-3.5 handles fine at a fraction of the cost. Don’t use a small model for complex analysis where quality matters more than speed.
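
Matching capability to requirements can be as simple as a routing table. This is a minimal sketch: the tier names and the 1 to 5 complexity scale are hypothetical placeholders, not real model identifiers.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelTier:
    name: str            # hypothetical identifier, not a real model name
    max_complexity: int  # highest task complexity (1-5) this tier should handle


# Ordered cheapest first; the values are illustrative assumptions.
TIERS = [
    ModelTier("small-fast-model", max_complexity=2),
    ModelTier("large-capable-model", max_complexity=5),
]


def choose_model(task_complexity: int) -> str:
    """Return the cheapest tier whose capability covers the task."""
    for tier in TIERS:
        if task_complexity <= tier.max_complexity:
            return tier.name
    raise ValueError(f"no tier handles complexity {task_complexity}")
```

Under these assumptions, a simple classification task rated 1 goes to the small tier, while complex analysis rated 4 goes to the large one.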

Where LLMs add genuine value

The use cases that work share common characteristics: they involve processing or generating natural language at scale, they benefit from contextual understanding, and they’re tasks humans can do but would take much longer.

Customer support that doesn’t feel robotic

AI chatbots powered by LLMs handle nuanced conversations that rule-based systems can’t manage. They understand when customers are frustrated, adapt their tone accordingly, and maintain context across multi-turn exchanges.

The key difference from older chatbots: LLMs can handle unexpected questions gracefully instead of failing when users deviate from predicted paths. They don’t solve every support issue, but they handle routine inquiries well enough that human agents focus on complex problems requiring empathy or judgment.

Content creation at scale

Generating product descriptions, email campaigns, social media posts, and blog drafts becomes dramatically faster with LLMs. These AI-powered features don’t replace human creativity, but they handle the routine content production that buries marketing teams.

I’ve seen e-commerce companies use LLMs to write unique descriptions for thousands of products that would otherwise get generic copy-paste text. The results aren’t Pulitzer-worthy, but they’re good enough for product pages and vastly better than nothing.

The pattern that works: use LLMs for first drafts, have humans refine and approve. This division of labor leverages what each does best.

Developer assistance and code generation

LLMs that write code, explain functions, suggest fixes, and debug errors have become legitimate productivity tools for developers. They don’t replace programming skill, but they accelerate implementation of routine tasks.

Writing boilerplate code, converting between languages, explaining complex codebases: tasks that used to consume hours now take minutes. The output requires review and often needs adjustment, but starting from 80% correct beats starting from scratch.

Document analysis and information extraction

Processing unstructured text to extract structured information is where LLMs excel. Typical examples include reading contracts to pull out key terms, analyzing research papers to summarize findings, and reviewing legal documents to identify relevant clauses.

Organizations that previously required humans to manually review thousands of documents can now automate much of that work. The LLM processes documents in seconds, extracts relevant information, and flags items needing human attention.

Accuracy isn’t perfect, but for many use cases, 95% accuracy at machine speed beats 100% accuracy at human speed.

Making smart architecture decisions

The technical choices you make early determine whether your LLM features scale well or become expensive maintenance burdens.

API versus self-hosting

Using cloud APIs from OpenAI, Anthropic, or Google is the easiest path. You get managed infrastructure, continuous model improvements, and no operational overhead. The downsides: ongoing per-use costs, dependency on external providers, and limited control over data handling.

Self-hosting gives you control but demands expertise. You need people who understand model deployment, hardware requirements, and operational challenges. For most organizations starting out, APIs make more sense. Self-hosting becomes attractive at scale or when data privacy requirements prevent using external services.

There’s no universal right answer. I’ve seen companies succeed with both approaches. What matters is matching the choice to your specific constraints around budget, scale, data sensitivity, and technical capability.

Prompt management systems

Hardcoding prompts in your application code is a mistake I see constantly. Prompts need frequent iteration based on results. Burying them in code means every change requires a deployment.

Treat prompts like configuration. Store them separately, version them, enable A/B testing, allow non-technical team members to refine them. This flexibility dramatically accelerates improvement cycles.

Good prompt management also enables monitoring which prompts perform well and which don’t, helping you optimize based on actual usage rather than guesses.
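
Treating prompts as configuration might look like the following sketch. The JSON layout, the prompt name `summarize`, and the function `render_prompt` are assumptions made for illustration; in practice the JSON would live in a file or config service rather than in code.

```python
import json
from string import Template

# Stand-in for an external, versioned prompt store.
PROMPT_CONFIG = json.loads("""
{
  "summarize": {
    "v1": "Summarize the following text: $text",
    "v2": "Summarize the following text in $max_words words or fewer: $text"
  }
}
""")


def render_prompt(name: str, version: str, **variables: str) -> str:
    """Look up a prompt template by name and version, then fill in its variables."""
    template = Template(PROMPT_CONFIG[name][version])
    # substitute() raises KeyError if a required variable is missing.
    return template.substitute(**variables)
```

Because the version is an explicit argument, an A/B test can serve `v1` and `v2` side by side and compare results without a code change.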

Retrieval-augmented generation

RAG patterns extend LLM capabilities by combining them with your own knowledge base. Instead of relying only on the model’s training data, you retrieve relevant documents first, then include them in the prompt for context.

This architecture solves several problems. It reduces hallucinations because the model grounds responses in provided documents rather than its memory. It lets you incorporate proprietary or current information beyond the model’s training cutoff. It enables citing sources for verifiability.

The trade-off is added complexity. You need a vector database for semantic search, retrieval logic to find relevant documents, and careful prompt engineering to use retrieved context effectively. But for many applications, especially those requiring accuracy and citations, RAG is essential.
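
The retrieve-then-prompt flow can be sketched in a few lines. Note the loud assumption: word-count vectors stand in for a real embedding model and an in-memory list stands in for a vector database, so this shows the shape of the pattern rather than a production retriever.

```python
import math
import re
from collections import Counter


def embed(text: str) -> Counter:
    """Toy stand-in for an embedding model: lowercase word counts."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    dot = sum(a[t] * b[t] for t in a)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def retrieve(question: str, documents: list[str], k: int = 2) -> list[str]:
    """Return the k documents most similar to the question."""
    q = embed(question)
    return sorted(documents, key=lambda d: cosine(q, embed(d)), reverse=True)[:k]


def build_rag_prompt(question: str, documents: list[str], k: int = 2) -> str:
    """Put the retrieved documents into the prompt as numbered sources."""
    sources = retrieve(question, documents, k)
    context = "\n".join(f"[{i + 1}] {doc}" for i, doc in enumerate(sources))
    return (
        "Answer using only the sources below and cite them by number.\n"
        f"Sources:\n{context}\n"
        f"Question: {question}"
    )


docs = [
    "Refunds are issued within 14 days of purchase.",
    "Our office is closed on public holidays.",
    "Shipping takes three to five business days.",
]
```

Numbering the sources in the prompt is what makes citation possible: the model can refer to `[1]` and the application can map that back to a document.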

Caching strategies

LLM API calls are expensive compared to traditional compute. Smart caching can cut costs dramatically.

Exact caching is straightforward: identical prompts get cached responses. This works well for common queries that don’t change.
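
A minimal in-memory sketch of exact caching follows. The `call_llm` argument is a hypothetical client function supplied by the caller; a real deployment would more likely use a shared store with expiry.

```python
import hashlib


class ExactCache:
    """Cache responses keyed by a hash of the model name and the exact prompt."""

    def __init__(self) -> None:
        self._store: dict[str, str] = {}
        self.hits = 0
        self.misses = 0

    @staticmethod
    def _key(model: str, prompt: str) -> str:
        return hashlib.sha256(f"{model}\n{prompt}".encode("utf-8")).hexdigest()

    def get_or_call(self, model: str, prompt: str, call_llm) -> str:
        key = self._key(model, prompt)
        if key in self._store:
            self.hits += 1
            return self._store[key]
        self.misses += 1
        response = call_llm(model, prompt)  # only reached on a cache miss
        self._store[key] = response
        return response
```

Including the model name in the key avoids serving one model’s answer for another model’s request.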

Semantic caching is more sophisticated: recognizing when different phrasings ask essentially the same question and returning cached responses even for novel wording. This requires additional infrastructure but maximizes cache hit rates with natural language variation.
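
The lookup logic can be sketched without the infrastructure. As a loudly labeled simplification, word-overlap (Jaccard) similarity stands in here for embedding similarity, and the 0.6 threshold is an arbitrary illustrative value.

```python
import re
from typing import Optional


def _tokens(text: str) -> frozenset:
    return frozenset(re.findall(r"[a-z0-9]+", text.lower()))


class SemanticCache:
    """Return a cached response when a new prompt is similar enough to a stored one."""

    def __init__(self, threshold: float = 0.6) -> None:
        self.threshold = threshold
        self._entries: list = []  # (token set, response) pairs

    def store(self, prompt: str, response: str) -> None:
        self._entries.append((_tokens(prompt), response))

    def lookup(self, prompt: str) -> Optional[str]:
        query = _tokens(prompt)
        best_score, best_response = 0.0, None
        for tokens, response in self._entries:
            union = query | tokens
            score = len(query & tokens) / len(union) if union else 0.0
            if score > best_score:
                best_score, best_response = score, response
        return best_response if best_score >= self.threshold else None
```

The threshold is the key design choice: set it too low and users get answers to questions they did not ask, too high and the cache rarely hits.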

The complexity versus cost savings calculation depends on your traffic patterns. High-volume applications with repeated queries benefit enormously from caching. Low-volume applications might not justify the infrastructure.

Ensuring reliable output quality

LLMs are powerful but unpredictable. Production deployments require quality controls that demos skip.

Output validation frameworks

Never trust LLM output blindly. Implement validation that checks responses against defined criteria before showing them to users.

This might mean verifying the format matches expectations, checking for prohibited content, confirming factual claims against known data, or ensuring the response addresses the actual question. Validation complexity scales with risk. Low-stakes applications might just check basic formatting. High-stakes applications require comprehensive checks.
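
A basic validator for a JSON response might look like this. The schema (`summary`, `sentiment`) and the blocklist are hypothetical examples; real criteria depend on the feature.

```python
import json

REQUIRED_KEYS = {"summary", "sentiment"}                  # hypothetical response schema
ALLOWED_SENTIMENTS = {"positive", "neutral", "negative"}
PROHIBITED_TERMS = ["guaranteed", "risk-free"]            # illustrative blocklist


def validate_response(raw: str) -> list[str]:
    """Return a list of problems; an empty list means the response passed."""
    try:
        data = json.loads(raw)
    except json.JSONDecodeError:
        return ["response is not valid JSON"]
    if not isinstance(data, dict):
        return ["response is not a JSON object"]
    problems = []
    missing = REQUIRED_KEYS - set(data)
    if missing:
        problems.append(f"missing keys: {sorted(missing)}")
    if data.get("sentiment") not in ALLOWED_SENTIMENTS:
        problems.append("sentiment is not an allowed value")
    summary = str(data.get("summary", "")).lower()
    for term in PROHIBITED_TERMS:
        if term in summary:
            problems.append(f"prohibited term: {term}")
    return problems
```

Returning a list of problems rather than a boolean makes it easier to log why a response was rejected or to retry with a corrective prompt.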

Human-in-the-loop workflows

For domains where errors have serious consequences (medical advice, legal guidance, financial recommendations), have experts review LLM outputs before delivery.

This hybrid approach leverages AI efficiency for initial drafts while maintaining human oversight where it matters. It’s slower than full automation but much safer for critical applications.
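
The routing decision at the heart of this workflow can be sketched as follows. The topic labels and the `ReviewQueue` class are assumptions for illustration; how a topic gets assigned to a draft is out of scope here.

```python
from dataclasses import dataclass, field
from typing import Optional

HIGH_RISK_TOPICS = {"medical", "legal", "financial"}  # the domains named above


@dataclass
class ReviewQueue:
    """Holds drafts until an expert approves them."""
    pending: list = field(default_factory=list)

    def submit(self, draft: str) -> None:
        self.pending.append(draft)

    def approve_next(self) -> str:
        """Release the oldest pending draft once a reviewer signs off."""
        return self.pending.pop(0)


def route_output(draft: str, topic: str, queue: ReviewQueue) -> Optional[str]:
    """Return the draft for immediate delivery, or None if it was held for review."""
    if topic in HIGH_RISK_TOPICS:
        queue.submit(draft)
        return None
    return draft
```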

Fine-tuning for specific domains

General-purpose LLMs work well for many tasks, but fine-tuning on domain-specific data creates models that better match your needs.

Fine-tuning requires curated training datasets, computational resources, and ongoing evaluation. It’s not something to do casually. But for organizations with specialized requirements and sufficient data, fine-tuned models can significantly outperform general alternatives.

The challenge is avoiding overfitting while maintaining the model’s general capabilities. It’s easy to create a model that’s great at your specific task but loses broader understanding.

Monitoring and observability

Track how your LLM features perform in production: response latency, token consumption, error rates, and user satisfaction. These metrics reveal problems before they become crises.

Log conversations for analysis. Which prompts consistently produce poor results? Where do users abandon conversations? What patterns emerge in successful versus failed interactions?

This observability enables continuous improvement. You’re not guessing about what works; you’re seeing it in actual usage data.
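
Summarizing logged calls into those metrics can be sketched as below. The `CallRecord` fields are assumptions about what gets logged, and the 95th percentile uses the simple nearest-rank method.

```python
import math
from dataclasses import dataclass


@dataclass
class CallRecord:
    latency_ms: float
    tokens: int
    ok: bool


def summarize(records: list) -> dict:
    """Compute p95 latency (nearest-rank), total tokens, and error rate."""
    if not records:
        raise ValueError("no records to summarize")
    latencies = sorted(r.latency_ms for r in records)
    rank = math.ceil(0.95 * len(latencies))  # nearest-rank percentile, 1-based
    return {
        "p95_latency_ms": latencies[rank - 1],
        "total_tokens": sum(r.tokens for r in records),
        "error_rate": sum(not r.ok for r in records) / len(records),
    }
```

Tail latency is tracked instead of the average because a handful of very slow responses can ruin the experience while barely moving the mean.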

Ready to build AI-powered features?

At Vofox Solutions, we help organizations implement large language models successfully, from strategy and architecture through deployment and optimization. Our AI/ML development expertise ensures your LLM features deliver reliable value in production.

Let’s discuss your AI integration challenges. Contact Vofox to explore how we can help you build features that actually work.

Avoiding expensive mistakes

The pitfalls that kill LLM projects are predictable. Here’s what actually goes wrong.

Costs that spiral out of control

Token-based pricing means verbose outputs and inefficient prompts directly hit your budget. A response that runs to 1,000 tokens instead of 200 costs five times as much in output tokens, multiplied across thousands of requests.
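
The arithmetic is worth making explicit. In this sketch the price per 1,000 tokens and the request volume are made-up numbers chosen only to show the ratio; substitute your provider’s actual rates.

```python
def monthly_output_cost(tokens_per_response: int, requests_per_day: int,
                        price_per_1k_tokens: float, days: int = 30) -> float:
    """Estimate monthly spend on output tokens alone (input tokens are ignored)."""
    return tokens_per_response / 1000 * price_per_1k_tokens * requests_per_day * days


PRICE_PER_1K = 0.002      # hypothetical price in dollars, not a real quote
REQUESTS_PER_DAY = 5000   # hypothetical volume

verbose = monthly_output_cost(1000, REQUESTS_PER_DAY, PRICE_PER_1K)
concise = monthly_output_cost(200, REQUESTS_PER_DAY, PRICE_PER_1K)
ratio = verbose / concise  # five times, regardless of the price assumed
```

The absolute numbers depend entirely on the assumptions, but the ratio does not: output length scales the bill linearly.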

Controlling costs requires prompt optimization for conciseness, smart caching to reduce redundant calls, appropriate model selection for different task complexities, and monitoring to catch expensive patterns early.

I’ve seen companies burn through budgets testing in production with expensive models when cheaper alternatives would work fine. Test with small models first and upgrade only when necessary.

Latency that ruins user experience

LLMs aren’t instant. Generation takes time proportional to output length and model size. An 8-second response feels broken in a chat interface expecting near-immediate replies.

Managing latency means streaming responses token-by-token so users see progressive output, preprocessing context to minimize per-request computation, setting clear expectations through loading indicators, and using faster models for latency-sensitive features.
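
Streaming can be illustrated with a generator. Here `fake_token_stream` is a stand-in for a streaming LLM client, which is an assumption; real clients expose their own streaming interfaces.

```python
import time
from typing import Callable, Iterator


def fake_token_stream(text: str, delay_s: float = 0.0) -> Iterator[str]:
    """Stand-in for a streaming LLM client: yields one word at a time."""
    for word in text.split():
        time.sleep(delay_s)  # simulates per-token generation time
        yield word + " "


def render_stream(stream: Iterator[str], write: Callable[[str], None]) -> str:
    """Forward each chunk to the UI as it arrives and return the full text."""
    parts = []
    for chunk in stream:
        write(chunk)         # the user sees progressive output here
        parts.append(chunk)
    return "".join(parts).rstrip()
```

Total generation time is unchanged, but the time until the user sees the first word drops to a single chunk, which is usually what makes a chat interface feel responsive.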

Sometimes accepting slightly lower quality from a faster model beats perfect responses that take too long.

Security and privacy concerns

Sending data to third-party APIs potentially exposes confidential information. What you put in prompts goes to external servers. For sensitive applications, that’s unacceptable.

Solutions include data anonymization before sending to APIs, using private deployments for sensitive data, implementing clear data handling policies, and validating inputs to prevent prompt injection attacks where malicious users try to override your instructions.
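
As one small piece of that, anonymization before an API call might start with pattern-based redaction. The two regular expressions below are deliberately simple assumptions and will miss many formats; they illustrate the step, not a complete PII filter.

```python
import re

# Simplified patterns for illustration only.
EMAIL = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
PHONE = re.compile(r"\+?\d[\d\s().-]{7,}\d")


def redact(text: str) -> str:
    """Replace email addresses and phone-like numbers with placeholders."""
    text = EMAIL.sub("[EMAIL]", text)
    return PHONE.sub("[PHONE]", text)
```

Running redaction before the prompt is built keeps the raw values from ever leaving your systems.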

Security can’t be an afterthought with LLMs. Build it into your architecture from the start.

Bias and fairness issues

LLMs inherit biases from their training data. Your AI features might inadvertently perpetuate stereotypes, exclude certain groups, or produce culturally insensitive content.

Addressing this requires regularly auditing outputs across diverse scenarios, incorporating feedback from affected communities, implementing bias detection in your validation framework, and being transparent about limitations.

There’s no perfect solution. But acknowledging the problem and actively working to mitigate it matters more than pretending your AI is neutral.

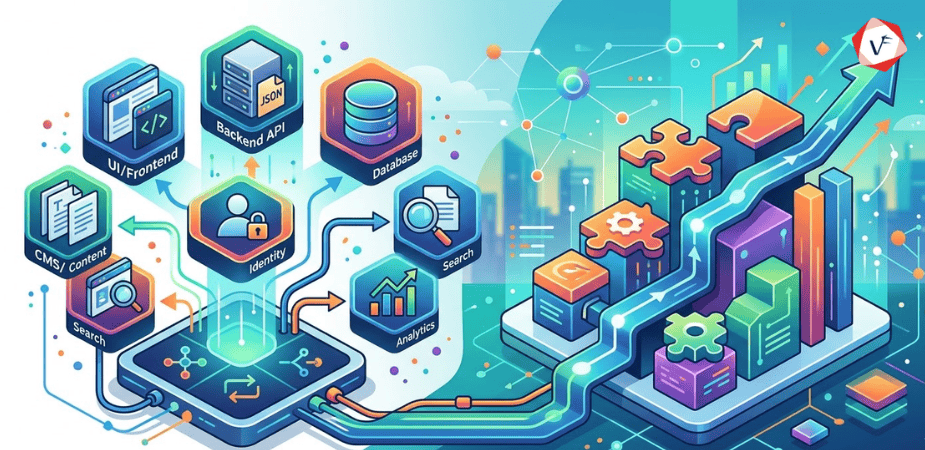

Integrating with existing systems

LLMs rarely work in isolation. They need to interact with your databases, APIs, authentication systems, and business logic.

API orchestration

Complex workflows often require coordinating multiple API calls, transforming data between systems, implementing retry logic, and handling errors gracefully.

Orchestration layers abstract this complexity, presenting clean interfaces to developers building features while managing the messy details of service coordination.
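
Retry logic is one of the details such a layer typically hides. The sketch below uses exponential backoff with jitter; `TransientError` is a hypothetical exception type standing in for whatever timeouts or rate-limit errors your client raises.

```python
import random
import time


class TransientError(Exception):
    """Hypothetical error type for failures worth retrying (timeouts, rate limits)."""


def call_with_retry(fn, max_attempts: int = 4, base_delay_s: float = 0.5, sleep=time.sleep):
    """Call fn(), retrying transient failures with exponential backoff and jitter."""
    for attempt in range(1, max_attempts + 1):
        try:
            return fn()
        except TransientError:
            if attempt == max_attempts:
                raise  # out of attempts: surface the error to the caller
            delay = base_delay_s * 2 ** (attempt - 1)        # 0.5, 1.0, 2.0, ...
            sleep(delay + random.uniform(0, delay / 2))      # jitter spreads out retries
```

The `sleep` parameter is injectable so the backoff schedule can be tested without actually waiting.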

Database integration

Connecting LLMs to structured data creates powerful analytics interfaces. Users ask questions in natural language, the system converts them to database queries, retrieves relevant records, and formats results into coherent responses.

This democratizes data access. Non-technical users can extract insights without learning SQL or BI tools. The LLM handles the translation between natural language and structured queries.

The challenge is ensuring accuracy. Natural language is ambiguous. Database schemas are precise. The translation isn’t always straightforward, and errors in query generation can return misleading results.
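
Whatever the model generates, it is worth guarding the query before it runs. This sketch uses SQLite and a coarse check that accepts only a single `SELECT` statement; the `orders` table is a made-up example, and a real system would also use a read-only database user rather than rely on string checks alone.

```python
import sqlite3


def run_readonly_query(conn: sqlite3.Connection, generated_sql: str) -> list:
    """Run model-generated SQL only if it is a single SELECT statement."""
    statement = generated_sql.strip().rstrip(";")
    if ";" in statement:
        # Conservative: also rejects semicolons inside string literals.
        raise ValueError("multiple statements are not allowed")
    if not statement.lower().startswith("select"):
        raise ValueError("only SELECT statements are allowed")
    return conn.execute(statement).fetchall()


# Hypothetical example data.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE orders (id INTEGER, total REAL)")
conn.executemany("INSERT INTO orders VALUES (?, ?)", [(1, 20.0), (2, 35.5)])
```

A guard like this addresses safety, not correctness: a valid `SELECT` can still answer the wrong question, so showing users the generated query alongside the result helps them catch misreadings.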

Authentication and authorization

LLM features need to respect existing security boundaries. They should only access information users have permission to view and generate content appropriate for their roles.

Implementing context-aware permission checks and filtering retrieved data based on access controls maintains security while providing personalized experiences.
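
Filtering retrieved data by access controls can be sketched as a step between retrieval and prompt construction. The `Document` shape and role names are assumptions for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Document:
    text: str
    allowed_roles: frozenset  # roles permitted to view this document


def filter_for_user(documents: list, user_roles: set) -> list:
    """Keep only documents the user is allowed to see, before they reach the prompt."""
    return [d for d in documents if d.allowed_roles & user_roles]


documents = [
    Document("Public holiday schedule", frozenset({"employee", "manager"})),
    Document("Salary bands by level", frozenset({"manager"})),
]
```

Filtering before the prompt is built matters: once restricted text is in the model’s context, no instruction reliably keeps it out of the response.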

Event-driven architectures

Decoupling LLM processing from synchronous request-response patterns improves system resilience. Queue systems handle burst traffic, background processing manages long-running tasks, and webhooks notify when results are ready.

These patterns enable building responsive applications even when LLM processing takes significant time.
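
The queue-and-worker pattern can be shown in miniature with the standard library. An in-process `queue.Queue` and a thread stand in for a real message broker and worker fleet, and `process` is a placeholder for the LLM call; both are assumptions of this sketch.

```python
import queue
import threading


def start_worker(jobs: queue.Queue, results: dict, process) -> threading.Thread:
    """Process jobs in the background so request handlers can return immediately."""
    def loop() -> None:
        while True:
            job = jobs.get()
            try:
                if job is None:  # sentinel value: stop the worker
                    return
                job_id, prompt = job
                try:
                    results[job_id] = ("done", process(prompt))
                except Exception as exc:  # record failures instead of killing the worker
                    results[job_id] = ("failed", str(exc))
            finally:
                jobs.task_done()

    thread = threading.Thread(target=loop, daemon=True)
    thread.start()
    return thread


jobs: queue.Queue = queue.Queue()
results: dict = {}
worker = start_worker(jobs, results, process=lambda prompt: prompt.upper())
jobs.put(("job-1", "summarize this"))  # the request handler would return here
jobs.join()                            # a client would poll or receive a webhook instead
```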

Preparing for continued advancement

LLMs are evolving fast. Models improve constantly, new capabilities emerge, and architectures change.

Building for maintainability

Design systems that accommodate model upgrades without requiring complete rewrites. Abstraction layers that isolate model-specific code from business logic let you swap providers or versions with minimal disruption.
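
Such an abstraction layer can be very thin. In this sketch `TextModel` is a hypothetical interface and `EchoModel` is a placeholder used instead of a real provider client; each real provider would get its own adapter class implementing the same method.

```python
from typing import Protocol


class TextModel(Protocol):
    """The only surface business logic is allowed to depend on."""
    def complete(self, prompt: str) -> str: ...


class EchoModel:
    """Placeholder provider used here instead of a real API client."""
    def complete(self, prompt: str) -> str:
        return f"echo: {prompt}"


class UppercaseModel:
    """A second placeholder, showing that providers are interchangeable."""
    def complete(self, prompt: str) -> str:
        return prompt.upper()


def summarize_ticket(model: TextModel, ticket: str) -> str:
    """Business logic: knows the prompt, not the provider."""
    return model.complete(f"Summarize this support ticket: {ticket}")
```

Swapping providers then means writing one new adapter and changing which object gets passed in, while `summarize_ticket` stays untouched.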

This flexibility matters because today’s best model won’t be tomorrow’s best model. You want to upgrade when better options appear, not be locked into outdated technology because migration is too expensive.

Multimodal capabilities

LLMs are expanding beyond text to include images, audio, and video understanding. Future features will process and generate content across multiple formats seamlessly.

Preparing for multimodal means designing architectures flexible enough to handle different input and output types without fundamental restructuring.

Staying current with the ecosystem

Tools, libraries, and frameworks for LLM development are improving constantly. Staying informed about new releases, evaluating emerging patterns, and participating in developer communities helps you build more efficiently.

The investment in learning pays off. Techniques that seemed cutting-edge six months ago might now be solved problems with established solutions.

Common questions about building with LLMs

What are large language models and how do they work?

Large language models are AI systems trained on massive amounts of text data to understand context and generate human-like responses. They use transformer architectures with billions of parameters to recognize patterns in how language works, enabling them to understand intent, maintain context, and perform reasoning tasks. Unlike simple keyword matching, LLMs comprehend nuance in natural language, which makes them powerful for building intelligent features. They work by predicting likely next words based on patterns learned during training.

Should I use an API or host my own LLM?

Cloud APIs from providers like OpenAI, Anthropic, or Google offer simplicity, managed infrastructure, and quick setup but create dependencies on external providers with ongoing per-use costs. Self-hosted deployments provide greater control over data privacy and potentially lower long-term costs at scale, but they require specialized expertise, significant hardware investment, and operational overhead. Choose based on your data sensitivity requirements, expected scale, budget constraints, and technical capability. Most organizations starting out benefit from APIs, moving to self-hosting only when scale or privacy requirements justify the complexity.

How much does it cost to build features with LLMs?

Costs vary widely based on usage volume, model choice, and implementation approach. API-based solutions typically charge per token processed, with costs ranging from fractions of a cent to several cents per request depending on model size and provider. A feature handling 10,000 requests daily (about 300,000 a month) might cost anywhere from $50 to $500 monthly at the low end of that range; at several cents per request, the same volume runs into thousands of dollars. Efficient prompt engineering, smart caching, appropriate model selection for different tasks, and monitoring help control expenses. Budget for both API costs and development time for integration, quality control, and ongoing optimization.

How do I prevent LLM hallucinations?

Hallucinations, where models confidently present incorrect information, can’t be completely eliminated but can be reduced through several strategies. Use retrieval-augmented generation to ground responses in provided documents, implement output validation that checks facts against known data, add human review for high-stakes applications, fine-tune models on accurate domain-specific datasets, use prompts that encourage citing sources, and clearly communicate limitations to users. The appropriate approach depends on your specific use case and acceptable error rates.

What’s prompt engineering and why does it matter?

Prompt engineering is crafting instructions that guide LLMs toward desired outputs. It matters because the quality of results depends heavily on how you ask. Vague prompts get vague results. Well-structured prompts that provide context, constraints, examples, and clear formatting instructions get much better output. Effective prompt engineering can mean the difference between a feature that barely works and one that delivers reliable value. It’s the primary interface for controlling model behavior without fine-tuning.

How long does it take to build AI features with LLMs?

Simple integrations, like adding a basic chatbot to a website, can be built in days. Production-ready features with proper error handling, quality controls, monitoring, and integration with existing systems typically take weeks to months. The timeline depends on complexity, quality requirements, team expertise, and how much infrastructure you’re building versus using existing tools. Plan for iterative development where you refine prompts, adjust architecture, and optimize based on actual usage patterns rather than getting everything perfect upfront.

Can LLMs replace human workers?

LLMs augment human capabilities more than they replace them. They excel at tasks that are routine, high-volume, and follow predictable patterns but struggle with tasks requiring genuine creativity, emotional intelligence, complex judgment, or accountability. The pattern that works best: LLMs handle first drafts, routine processing, and high-volume tasks while humans provide oversight, handle exceptions, make critical decisions, and refine outputs. This division of labor leverages what each does best rather than expecting LLMs to fully replace human expertise.

What’s the difference between fine-tuning and prompt engineering?

Prompt engineering adjusts the inputs you give to an LLM to control its behavior without changing the model itself. It’s faster, cheaper, and more flexible but has limits on how much you can change behavior. Fine-tuning involves training the model further on your own dataset to adapt it specifically to your domain, writing style, or tasks. It’s more expensive and time-consuming but can create models that significantly outperform general-purpose alternatives for specialized applications. Most use cases start with prompt engineering, moving to fine-tuning only when necessary.

Making it work in practice

Building AI-powered features with large language models isn’t about chasing the latest technology. It’s about solving real problems with tools that are finally capable enough to deliver value.

The gap between demo and production is real. Features that impress in controlled tests often struggle with the messiness of actual usage. Users ask unexpected questions. Edge cases emerge constantly. Costs and latency matter more in production than in prototypes.

Success comes from treating LLMs as powerful but imperfect tools that need careful integration, ongoing monitoring, and realistic expectations. They’re not magic. They’re sophisticated pattern matching at scale.

The organizations seeing real value focus on use cases where LLM strengths align with actual needs. They invest in proper architecture instead of rushing to production. They implement quality controls appropriate to their risk tolerance. They monitor and optimize based on real usage data.

Most importantly, they recognize that building AI features is iterative. Your first prompts won’t be optimal. Your initial architecture will need refinement. Usage patterns will surprise you. Plan for continuous improvement rather than expecting to get everything right upfront.

The technology keeps advancing. Models get better, costs decrease, capabilities expand. What seems impossible today might be routine in six months. But the fundamentals remain: understand your use case, choose appropriate tools, implement quality controls, monitor results, and iterate based on feedback.

If you’re considering LLM integration, start small. Pick one feature that would deliver clear value. Build it properly. Learn from real usage. Then expand based on what works rather than trying to transform everything simultaneously.

The opportunity is genuine. LLMs enable features that weren’t possible before. But realizing that opportunity requires moving past the hype to focus on what actually works in production.

That’s harder than demos make it look, and more valuable than most organizations realize.